Six months into 2020, a buzz emerged across the globe as OpenAI unveiled the latest version of its Generative Pre-trained Transformer, GPT-3, a language model with the ability to produce human-like text on a massive scale.

By March 2021, OpenAI reported that GPT-3 was generating an average of 4.5 billion words per day through its API, which gives developers access to the model.

The release of GPT-3 marked a significant milestone for AI and machine learning as it was the first time that a neural network had been trained to generate natural language at such a large scale. The result was a new benchmark for artificial intelligence.

What is GPT-3?

In simple terms, GPT-3 or ‘Generative Pre-trained Transformer 3’ is a trained language model that can generate natural-sounding text from scratch.

Developed by OpenAI, a San Francisco-based AI research company that was founded as a non-profit with Elon Musk among its co-founders, GPT-3 specializes in natural language processing (NLP). The model has 175 billion machine learning parameters and was trained on text drawn largely from Common Crawl data (encompassing information gathered over eight years of crawling the web), which amounted to around 45 TB of text before filtering.

In contrast, GPT-1, released just two years prior, had 117 million parameters and GPT-2, launched in 2019, had 1.5 billion parameters.
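To put those numbers side by side, here is a small sketch that works out how much each model grew over its predecessor. It uses only the parameter counts quoted in this article; the dictionary and function names are our own and purely illustrative.

```python
# Parameter counts as quoted in this article.
PARAMETERS = {
    "GPT-1": 117_000_000,
    "GPT-2": 1_500_000_000,
    "GPT-3": 175_000_000_000,
}


def growth_factor(older: str, newer: str) -> float:
    """Return how many times more parameters `newer` has than `older`."""
    return PARAMETERS[newer] / PARAMETERS[older]


# Print the growth between each pair of models.
for older, newer in [("GPT-1", "GPT-2"), ("GPT-2", "GPT-3"), ("GPT-1", "GPT-3")]:
    print(f"{older} -> {newer}: about {growth_factor(older, newer):,.0f}x")
```

In other words, GPT-2 was roughly 13 times the size of GPT-1, and GPT-3 is over 100 times the size of GPT-2.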

How does GPT-3 work?

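GPT-3 doesn’t look text up in a database of articles. Instead, during training, a neural network is fed a huge volume of text and learns which words commonly follow each other. When it writes, it repeatedly predicts the most likely next word (strictly speaking, a word fragment called a token) based on everything that came before it.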
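The idea of learning which words commonly follow each other can be illustrated with a toy word-pair counter. This is a deliberately tiny sketch and not how GPT-3 is implemented: GPT-3 is a large Transformer neural network, while the example below simply counts word pairs in a made-up corpus. Every name in it is hypothetical.

```python
from collections import Counter, defaultdict
from typing import Dict, Optional


def train_bigram_counts(text: str) -> Dict[str, Counter]:
    """Count, for every word, which words follow it in the training text."""
    words = text.lower().split()
    followers: Dict[str, Counter] = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        followers[current][nxt] += 1
    return followers


def most_likely_next(followers: Dict[str, Counter], word: str) -> Optional[str]:
    """Return the most frequent follower of `word`, or None if it was never seen."""
    counts = followers.get(word.lower())
    if not counts:
        return None
    return counts.most_common(1)[0][0]


def generate(followers: Dict[str, Counter], start: str, length: int = 5) -> str:
    """Greedily extend `start` one word at a time, up to `length` words in total."""
    output = [start.lower()]
    while len(output) < length:
        nxt = most_likely_next(followers, output[-1])
        if nxt is None:
            break
        output.append(nxt)
    return " ".join(output)


# A made-up training corpus, for illustration only.
corpus = "the cat sat on the mat . the cat sat on the rug . the dog ate the fish ."
model = train_bigram_counts(corpus)

print(most_likely_next(model, "sat"))    # on
print(generate(model, "the", length=4))  # the cat sat on
```

A real language model replaces the simple counts with billions of learned parameters and looks at far more context than the previous word, but the generate-one-word-at-a-time loop is the same basic idea.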

As the old saying goes, give a man a fish and you feed him for a day; teach a man to fish and you feed him for a lifetime. In the same way, GPT-3 is designed to learn from examples. You can give it a few pre-written samples in your prompt and it’ll create new content in the same style.
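Giving the model pre-written examples usually means placing them in the prompt ahead of your own request. Here is a minimal sketch of how such a prompt could be assembled. The function name, the “Product” and “Tagline” labels, and the sample data are all hypothetical, and the step of actually sending the prompt to GPT-3 is left out because it depends on the API client you use.

```python
from typing import List, Tuple


def build_few_shot_prompt(
    instruction: str,
    examples: List[Tuple[str, str]],
    new_input: str,
) -> str:
    """Assemble an instruction, worked examples, and a new request into one prompt."""
    lines = [instruction, ""]
    for product, tagline in examples:
        lines.append(f"Product: {product}")
        lines.append(f"Tagline: {tagline}")
        lines.append("")
    # Leave the last tagline empty so the model completes it.
    lines.append(f"Product: {new_input}")
    lines.append("Tagline:")
    return "\n".join(lines)


# Made-up examples, for illustration only.
prompt = build_few_shot_prompt(
    instruction="Write a short, playful tagline for each product.",
    examples=[
        ("reusable water bottle", "Sip happens. Do it sustainably."),
        ("standing desk", "Rise to the occasion."),
    ],
    new_input="noise-cancelling headphones",
)
print(prompt)
```

The more closely your examples match the tone and format you want, the more likely the output is to follow suit.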

What are the benefits of GPT-3 in content creation?

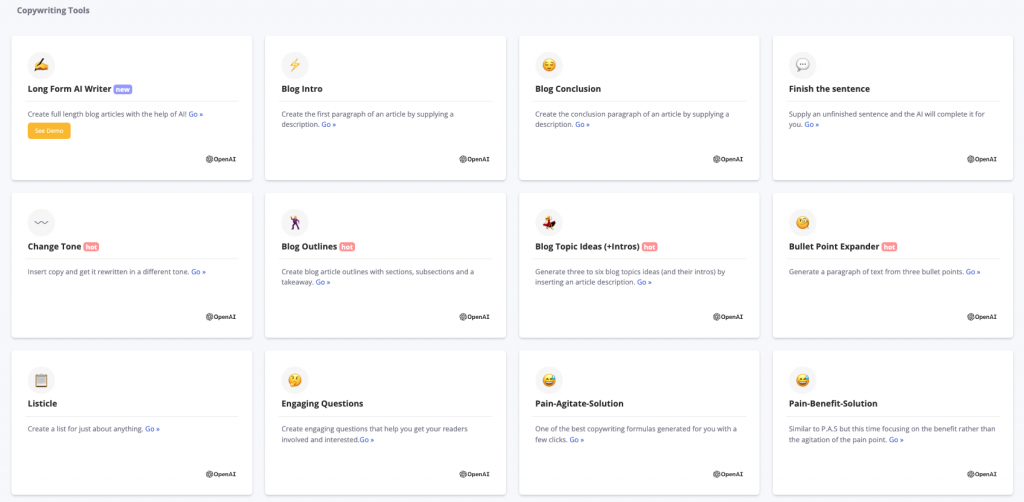

At ContentBot, we use GPT-3 to help you write a wide variety of content.

The main advantage of GPT-3 is that it can create large amounts of unique content with very little manual effort. Thus, it can be used for many purposes such as generating blog posts, short stories, product descriptions, poetry, sales pages, emails, product reviews, article outlines, song lyrics, and even books.

Some other pros for content creators include:

- No more ‘writer’s block’ or staring at the blinking cursor waiting for inspiration to strike

- Create fresh short-form and long-form content in a fraction of the time

- High-quality output

- Automate tasks such as writing emails and social media posts

- Get creative with your content and easily switch up your writing style according to your audience

- You’ll never run out of topics to discuss

With GPT-3, the world’s your oyster with the only constraint being your imagination – at least when it comes to creating text-based applications.

There are a plethora of innovative use cases with this AI language model such as chatbots, text-based games, code generation, music production, image autocompletion, and AI multi-language voiceovers, among others.

Here’s a YouTube video showcasing some creative applications built using GPT-3’s API.

Will GPT-3 replace content writers?

The simple answer is no. Content creation tools based on GPT-3 are just meant to help writers save time and aid them in the creative process.

If you expect GPT-3 to replace all of your content needs, you’re going to be highly disappointed.

Simply clicking a button and expecting AI to do the work for you isn’t going to yield a great outcome as the content generated needs guidance, context, fact-checking, proofreading, editing, and human intervention to be written in a way that will resonate with your audience.

Writers are hired for their skill set, knowledge, and the results they deliver. For example, content marketers are employed to write compelling blogs that convert visitors into leads. They use research, strategy, subject expertise, and a strong understanding of SEO to develop engaging content that ranks well on search engines and converts readers into customers.

So, at the end of the day, GPT-3 is just another tool to help writers do their work more efficiently.

Is GPT-3 generated content unique?

Yes! The majority of generated content is unique. GPT-3 is able to do this because it uses a neural network to learn from previous examples and then applies those lessons to new situations. As such, the output produced by GPT-3 is also readable and similar to what humans would come up with.

However, there might be phrases or sentences that overlap with pre-existing content. Thus, it’s important to always run a plagiarism check before clicking the ‘publish’ button.
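To give a feel for what an overlap check does, here is a rough sketch that measures how many word sequences in a generated text also appear in one known reference text. It’s a toy illustration under our own assumptions: real plagiarism checkers compare against huge indexes of web content, and the function names and the five-word default are hypothetical choices.

```python
import re
from typing import Set, Tuple


def word_ngrams(text: str, n: int = 5) -> Set[Tuple[str, ...]]:
    """Return the set of n-word sequences in `text`, ignoring case and punctuation."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    return {tuple(words[i:i + n]) for i in range(len(words) - n + 1)}


def overlap_ratio(candidate: str, reference: str, n: int = 5) -> float:
    """Return the share of the candidate's n-word sequences found in the reference."""
    candidate_ngrams = word_ngrams(candidate, n)
    if not candidate_ngrams:
        return 0.0
    shared = candidate_ngrams & word_ngrams(reference, n)
    return len(shared) / len(candidate_ngrams)


# Made-up texts, for illustration only.
reference = "The quick brown fox jumps over the lazy dog near the river bank."
generated = "Yesterday the quick brown fox jumps over a sleeping cat."

print(overlap_ratio(generated, reference, n=3))  # 0.5
```

A high ratio suggests that parts of the draft should be rewritten or attributed before you publish.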

What are some concerns surrounding GPT-3 generated content?

As GPT-3 was trained on a wide array of content created by human writers (consider the best and worst of the internet – from CNN and Wikipedia articles to Reddit), it’s natural that many of our human fallacies, biases, and prejudices can creep into the AI-generated content.

Sam Altman, the CEO and co-founder of OpenAI, has also acknowledged in a tweet that there’s a lot of hype surrounding GPT-3 and that “it still has serious weaknesses and sometimes makes very silly mistakes.”

Studies have also shown that GPT-3 can produce text that is racist, communalist, and sexist in nature. There are also fears that it could be used to spread misinformation if it falls into the wrong hands. Thus, it’s vital to fact-check GPT-3 generated content and exercise caution in using AI to write about sensitive issues like politics, religion, sex, money, drugs, health, and death.

Ethics should be a cornerstone of technology. If used ethically, GPT-3 has the power to revolutionize content production.

Will there be a GPT-4?

There’s no reason why not. Going by history, there’s been a new release every year – from GPT-1 in 2018 to GPT-3 in 2020.

And according to a Wired article, Andrew Feldman, the founder of Cerebras, a company that built the largest chip ever made to power AI systems, said, “From talking to OpenAI, GPT-4 will be about 100 trillion parameters.” However, this will likely take several years to develop.

An article by the Medium publication Towards Data Science puts GPT-4’s supposed parameters into perspective, “The brain has around 80–100 billion neurons and around 100 trillion synapses. GPT-4 will have as many parameters as the brain has synapses.”

If that figure holds, GPT-4 would be more than 500 times bigger than GPT-3 (100 trillion ÷ 175 billion ≈ 570).

That’s massive!

In conclusion, GPT-3 offers a glimpse into how artificial intelligence will positively impact content creation. It’s easy to see where its strengths lie, but we’re only scratching the surface when it comes to the potential applications of GPT-3.

Article written by Annalie Gracias

